|

I am a Researcher and a Ph.D. candidate at Augmented Vision group, Deutsches Forschungszentrum für Künstliche Intelligenz (DFKI),

advised by Prof. Dr. Didier Stricker,

Dr. Alain Pagani,

and Dr. Vladislav Golyanik.

My research topic is 3D human pose estimation (including hands) and 3D scene reconstruction from

egocentric cameras. Previously, I obtained my MS at Saarland University (SIC) advised by Prof. Dr. Christian Theobalt on the topic of "3D Human Motion Capture from Egocentric Event Streams" and my BE from St. Joseph's Institute of Technology advised by Prof. Dr. C. Gnana Kousalya on the topic of "Visually Impaired Navigation With Artificial Intelligence".

E-mail: Christen.Millerdurai@dfki.de |

|

DFKI

4DQV

SIC

St.Joseph's Institute of Technology |

|

[Feb 2026] One paper got accepted in CVPR 2026.

|

|

My research focus lies at the intersection of Computer Vision, Computer Graphics and Robotics. In particular, I am interested in the following topics:

|

|

|

|

ReLaGS: Relational Language Gaussian SplattingYaxu Xie, Abdalla Arafa, Alireza Javanmardi, Christen Millerdurai, Jia Cheng Hu, Shaoxiang Wang, Alain Pagani, Didier StrickerComputer Vision and Pattern Recognition (CVPR), 2026 [Project page] [Paper] [Code] Achieving unified 3D perception and reasoning across tasks such as segmentation, retrieval, and relation understanding remains challenging, as existing methods are either object-centric or rely on costly training for inter-object reasoning. We present a novel framework that constructs a hierarchical language-distilled Gaussian scene and its 3D semantic scene graph without scene-specific training. A Gaussian pruning mechanism refines scene geometry, while a robust multi-view language alignment strategy aggregates noisy 2D features into accurate 3D object embeddings. On top of this hierarchy, we build an open-vocabulary 3D scene graph with Vision Language-derived annotations and Graph Neural Network-based relational reasoning. Our approach enables efficient and scalable open-vocabulary 3D reasoning by jointly modeling hierarchical semantics and inter/intra-object relationships, validated across tasks including open-vocabulary segmentation, scene graph generation, and relation-guided retrieval. |

|

TalkingPose: Efficient Face and Gesture Animation with Feedback-guided Diffusion ModelAlireza Javanmardi, Pragati Jaiswal, Tewodros Amberbir Habtegebrial, Christen Millerdurai, Shaoxiang Wang, Alain Pagani, Didier StrickerWinter Conference on Applications of Computer Vision (WACV), 2026 [Project page] [Paper] [Code] Recent advancements in diffusion models have significantly improved the realism and generalizability of character-driven animation, enabling the synthesis of high-quality motion from just a single RGB image and a set of driving poses. Nevertheless, generating temporally coherent long-form content remains challenging. Existing approaches are constrained by computational and memory limitations, as they are typically trained on short video segments, thus performing effectively only over limited frame lengths and hindering their potential for extended coherent generation. To address these constraints, we propose TalkingPose, a novel diffusion-based framework specifically designed for producing long-form, temporally consistent human upper-body animations. TalkingPose leverages driving frames to precisely capture expressive facial and hand movements, transferring these seamlessly to a target actor through a stable diffusion backbone. To ensure continuous motion and enhance temporal coherence, we introduce a feedback-driven mechanism built upon image-based diffusion models. |

|

Inpaint360GS: Efficient Object-Aware 3D Inpainting via Gaussian Splatting for 360° ScenesShaoxiang Wang, Shihong Zhang, Christen Millerdurai, Rüdiger Westermann, Didier Stricker, Alain PaganiWinter Conference on Applications of Computer Vision (WACV), 2026 [Project page] [Paper] [Code] Despite recent advances in single-object front-facing inpainting using NeRF and 3D Gaussian Splatting (3DGS), inpainting in complex 360◦ scenes remains largely underexplored. This is primarily due to three key challenges: (i) identifying target objects in the 3D field of 360° environments, (ii) dealing with severe occlusions in multi-object scenes, which makes it hard to define regions to inpaint, and (iii) maintaining consistent and high-quality appearance across views effectively. To tackle these challenges, we propose Inpaint360GS, a flexible 360◦ editing framework based on 3DGS that supports multi-object removal and high-fidelity inpainting in 3D space. By distilling 2D segmentation into 3D and leveraging virtual camera views for contextual guidance, our method enables accurate object-level editing and consistent scene completion. We further introduce a new dataset tailored for 360◦ inpainting, addressing the lack of ground truth object-free scenes. Experiments demonstrate that Inpaint360GS outperforms existing baselines and achieves state-of-the-art performance. |

|

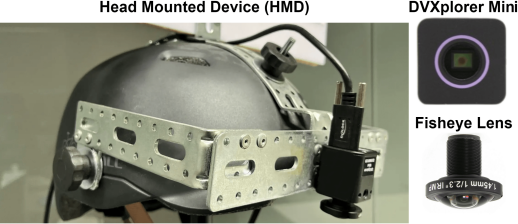

EventEgo3D++: 3D Human Motion Capture from a Head Mounted Event CameraChristen Millerdurai, Hiroyasu Akada, Jian Wang, Diogo Luvizon, Alain Pagani, Didier Stricker, Christian Theobalt, Vladislav GolyanikInternational Journal of Computer Vision (IJCV), 2025 [Project page] [Paper] [Code] This paper is an extension of our previous work "EventEgo3D (CVPR 2024)". We tackle a new problem, i.e. 3D human motion capture from an egocentric monocular event camera with a fisheye lens. "EventEgo3D (CVPR 2024)" proposed the EE3D framework that is specifically tailored for learning with event streams in the LNES representation, enabling high 3D reconstruction accuracy. We upgrade this framework to a new EE3D++ framework for further performance improvement. We also introduce a new dataset, EE3D-W, in addition to EE3D-S and EE3D-R from "EventEgo3D (CVPR 2024)". |

|

Uni-slam: Uncertainty-aware neural implicit slam for real-time dense indoor scene reconstructionShaoxiang Wang, Yaxu Xie, Chun-Peng Chang, Christen Millerdurai, Alain Pagani, Didier StrickerWinter Conference on Applications of Computer Vision (WACV), 2025 [Project page] [Paper] [Code] Neural implicit fields have recently emerged as a powerful representation method for multi-view surface reconstruction due to their simplicity and state-of-the-art performance. However, reconstructing thin structures of indoor scenes while ensuring real-time performance remains a challenge for dense visual SLAM systems. Previous methods do not consider varying quality of input RGB-D data and employ fixed-frequency mapping process to reconstruct the scene, which could result in the loss of valuable information in some frames. In this paper, we propose Uni-SLAM, a decoupled 3D spatial representation based on hash grids for indoor reconstruction. We introduce a novel defined predictive uncertainty to reweight the loss function, along with strategic local-to-global bundle adjustment. Experiments on synthetic and real-world datasets demonstrate that our system achieves state-of-the-art tracking and mapping accuracy while maintaining real-time performance. |

|

EventEgo3D: 3D Human Motion Capture from Egocentric Event StreamsChristen Millerdurai, Hiroyasu Akada, Jian Wang, Diogo Luvizon, Christian Theobalt, Vladislav GolyanikComputer Vision and Pattern Recognition (CVPR), 2024 [Project page] [Paper] [Code] We tackle a new problem, i.e. 3D human motion capture from an egocentric monocular event camera with a fisheye lens. Event streams have high temporal resolution and could provide reliable cues for 3D human motion capture under high-speed human motions and rapidly changing illumination. We leverage these characteristics and propose the first approach for event-based 3D human pose estimation, EventEgo3D (EE3D). The proposed EE3D framework is specifically tailored for learning with event streams in the LNES representation, enabling high 3D reconstruction accuracy. We also provide two new datasets, EE3D-S and EE3D-R. |

|

3D Pose Estimation of Two Interacting Hands from a Monocular Event CameraChristen Millerdurai, Diogo Luvizon, Viktor Rudnev, André Jonas, Jiayi Wang, Christian Theobalt, Vladislav GolyanikInternational Conference on 3D Vision (3DV), 2024, Spotlight [Project page] [Paper] [Code] 3D hand tracking from monocular video is hard due to low-light and fast motion. RGB methods often fail in these cases due to motion blur and low-dynamic range. Event cameras avoid these issues but differ radically from images, so existing techniques don’t transfer. We present the first framework to track two fast, interacting hands from a single event camera, using a semi‑supervised feature‑wise attention module to resolve left‑right ambiguity and an intersection loss to prevent collisions. We release two datasets: Ev2Hands‑S (synthetic) and Ev2Hands‑R (real events with 3D ground truth). Our method improves 3D reconstruction accuracy and generalizes to real, low‑light conditions. |

|

Experience: [Apr 2023 - Sep 2023] ML Intern, AUDI AG. (Ingolstadt, Germany) [Sep 2022 - Mar 2023] Research Intern, Max Planck Institute for Informatics (Saarbrucken, Germany) [Jun 2022 - Aug 2022] ML Intern, StarryAI.com. (Remote) [Oct 2018 - Dec 2020] Engineer - II, Signzy. (Bangalore, India) [Jun 2018 - Sep 2018] ML Intern, Signzy. (Bangalore, India) [Jan 2018 - May 2018] Intern, Amazon.com, Inc. (Chennai, India)

Education:

[Feb 2024 - Present] Ph.D. student, DFKI (Kaiserslautern, Germany) |

|

Thesis Students:

Teaching:

Reviewer Experience:

|

|

© Christen Millerdurai / Design: jonbarron Icons: Freepik - Flaticon |